How to Evaluate AI Visibility Tools

The AI visibility category is new and fragmented. Tools vary wildly in approach, data quality, and usefulness. Here is a framework for evaluating them — including where Gumshoe fits.

Published Jun 2025 · Updated Mar 2026

The Four Types of AI Visibility Tools

Not all tools in this space solve the same problem. Understanding the categories helps you evaluate what you actually need:

SEO tools adding AI features

Traditional SEO platforms that have bolted on AI tracking. Typically keyword-based, shallow in coverage, and limited to 1-2 models. Better than nothing, but they treat AI search like traditional search with a different interface.

Browser scraping monitors

Tools that automate browser sessions to capture AI responses. Fragile (they break when UIs change), non-compliant (they violate terms of service), and prone to data polluted by personalization and caching artifacts.

API-based monitoring platforms

Tools that access AI models through official APIs. Clean data, compliant, stable. The best platforms combine API access with persona-driven testing and statistical aggregation. This is where Gumshoe sits.

AI content optimization tools

Tools focused on making your content more AI-friendly. Useful for action, but they do not monitor your actual visibility. You need measurement before you can optimize effectively.

7 Questions to Ask When Evaluating Tools

1. How many AI models do they track?

AI visibility varies dramatically across models. A tool tracking only ChatGPT misses 80% of the picture. Look for coverage across ChatGPT, Gemini, Claude, Perplexity, DeepSeek, Grok, and AI Overviews at minimum.

Red flag: "We track ChatGPT" with no mention of other models.

2. API access or browser scraping?

API access produces clean, reliable, compliant data. Scraping violates terms of service, produces data polluted by personalization, and breaks when providers change their UI. Read about why this matters.

Red flag: Vague language about "proprietary technology" without stating API access.

3. Keyword-driven or persona-driven?

Keyword-based tools ask generic questions. Persona-driven tools simulate how real buyers ask AI for recommendations — with role, industry, and evaluation context. The same question asked by a CTO and a freelancer produces entirely different AI responses.

Red flag: No mention of personas, buyer context, or prompt variation.
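To make the difference concrete, here is a minimal sketch of how persona-driven prompts might be generated. The `Persona` fields, the templates, and the `build_prompts` helper are illustrative assumptions, not a description of how any particular vendor builds its prompts.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Persona:
    # Hypothetical persona fields: role, industry, and evaluation context.
    role: str
    industry: str
    context: str


def build_prompts(category: str, personas: list[Persona], templates: list[str]) -> list[str]:
    """Expand every persona x template pair into one concrete prompt."""
    return [
        t.format(category=category, role=p.role, industry=p.industry, context=p.context)
        for p, t in product(personas, templates)
    ]


# Illustrative personas and templates (assumptions for this sketch).
personas = [
    Persona("CTO", "fintech", "SOC 2 compliance and SSO"),
    Persona("freelancer", "design", "a budget under $20 per month"),
]
templates = [
    "I'm a {role} in {industry}. Which {category} would you recommend? Key constraint: {context}.",
    "What are the best {category} options for a {industry} {role} who cares about {context}?",
]

prompts = build_prompts("project management tools", personas, templates)
for prompt in prompts:
    print(prompt)
```

A keyword-based tool would send one generic question for the category. Here two personas and two templates already yield four distinct prompts, each carrying buyer context.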

4. Do they track citation sources?

Citations are the most actionable data in AI visibility. Knowing which sources AI models cite when recommending competitors tells you exactly where to focus. A tool without citation tracking gives you scores without explaining them.

Red flag: Visibility scores with no insight into why.
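As a rough sketch of what citation tracking involves, the snippet below counts which domains are cited in responses that mention a given competitor. The response structure (a `text` field plus a list of `citations` URLs), the brand names, and the URLs are all made up for illustration. Brand matching is a naive substring check.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(responses: list[dict], brand: str) -> Counter:
    """Count domains cited in responses whose text mentions `brand`."""
    counts: Counter = Counter()
    for response in responses:
        if brand.lower() not in response["text"].lower():
            continue
        # Count each domain at most once per response.
        domains = {
            urlparse(url).netloc.lower().removeprefix("www.")
            for url in response["citations"]
        }
        counts.update(domains)
    return counts


# Hypothetical sample data.
responses = [
    {"text": "Acme and Globex are strong options.",
     "citations": ["https://www.reviews.example.com/best-crm",
                   "https://blog.example.org/crm-roundup"]},
    {"text": "Globex is popular with small teams.",
     "citations": ["https://reviews.example.com/globex",
                   "https://reviews.example.com/globex-pricing"]},
    {"text": "Acme is a solid choice.",
     "citations": ["https://docs.example.net/acme"]},
]

globex_sources = cited_domains(responses, "Globex")
print(globex_sources.most_common())
```

The most frequently cited domains for a competitor are the natural first places to look when deciding where to focus.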

5. Can they do competitive benchmarking?

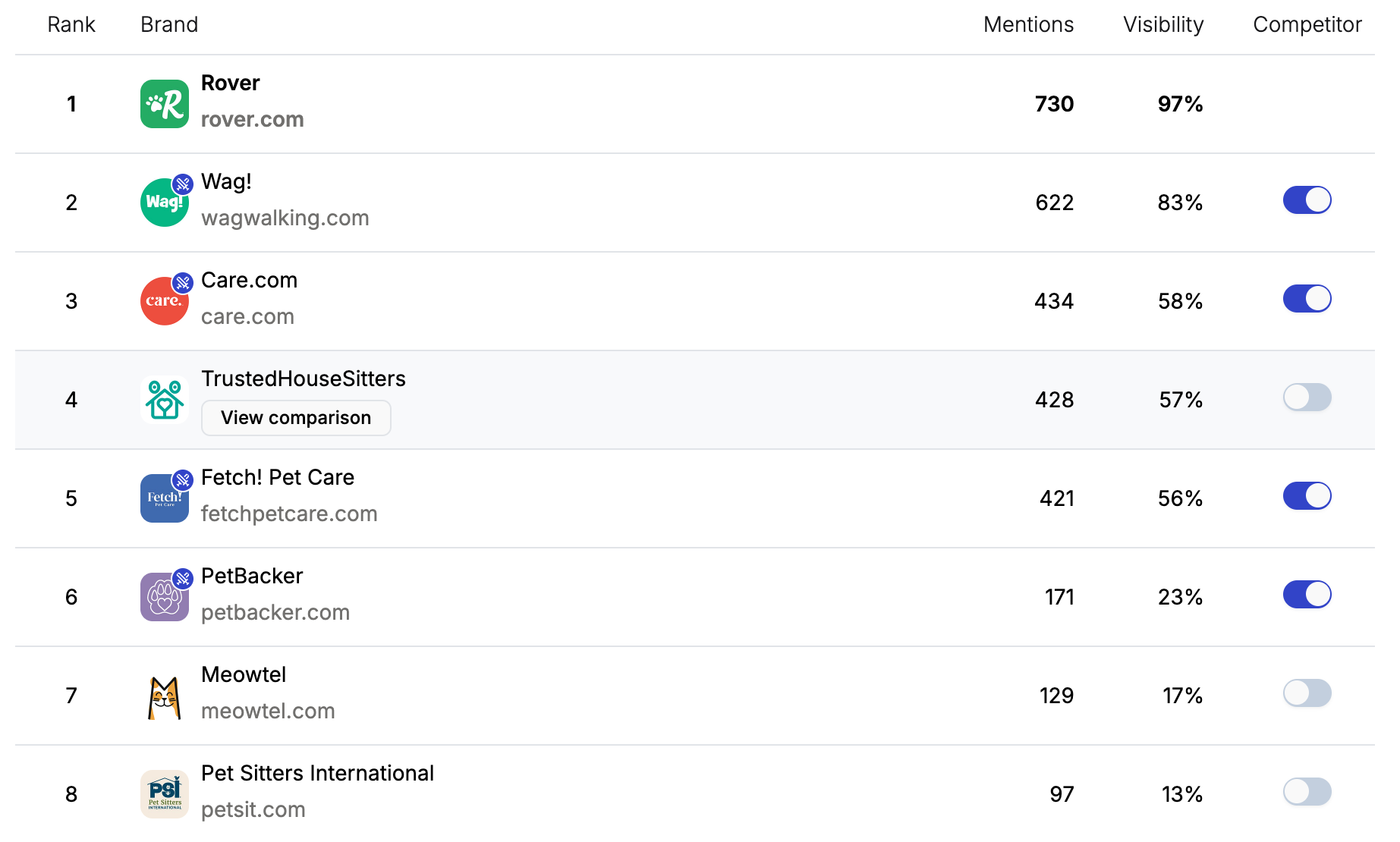

Your visibility exists in a competitive context. A tool that shows your score without showing your competitors' scores is only half useful. Look for brand leaderboards and competitive share of voice.

Red flag: No competitive data or manual-only competitor tracking.
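One simple way to think about competitive share of voice is each brand's share of all brand mentions across a set of responses. The sketch below assumes that definition, which is only one of several in use, and relies on naive substring matching with made-up brand names.

```python
from collections import Counter


def share_of_voice(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Each brand's fraction of all brand mentions (at most one per response)."""
    mentions: Counter = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {b: (mentions[b] / total if total else 0.0) for b in brands}


# Hypothetical responses and brands.
responses = [
    "Acme and Globex both fit.",
    "Globex is the usual pick.",
    "Consider Initech or Globex.",
    "Acme is worth a look.",
]
sov = share_of_voice(responses, ["Acme", "Globex", "Initech"])

# Sort into a leaderboard, highest share first.
leaderboard = sorted(sov.items(), key=lambda item: item[1], reverse=True)
for brand, share in leaderboard:
    print(f"{brand}: {share:.0%}")
```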

6. Do they support scheduled monitoring?

AI visibility changes over time as models are updated and your content landscape shifts. One-time reports are a starting point. Ongoing scheduled monitoring is how you measure the impact of your GEO efforts and catch competitive shifts early.

Red flag: One-time analysis with no trend tracking.

7. How do they handle non-determinism?

AI answers are probabilistic. A tool that shows you a single AI response as "your visibility" is misleading. Look for statistical approaches that aggregate across many unique prompts to produce reliable visibility percentages.

Red flag: Screenshot-based "proof" or single-response reporting.
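To see why a single response is weak evidence, consider a minimal sketch that treats each response as a yes/no observation (brand mentioned or not) and puts a Wilson score interval around the resulting visibility percentage. The Wilson interval is our choice for illustration here. The sample counts are invented, and real tools may aggregate differently.

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# One response that happens to mention the brand: "100% visibility"?
lo1, hi1 = wilson_interval(hits=1, n=1)

# 42 mentions across 100 distinct prompts.
lo100, hi100 = wilson_interval(hits=42, n=100)

print(f"n=1:   {lo1:.0%} to {hi1:.0%}")
print(f"n=100: {lo100:.0%} to {hi100:.0%}")
```

With one response the plausible range spans most of the scale. With a hundred it narrows to roughly ten points either side of the estimate, which is why aggregated percentages are more trustworthy than screenshots.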

How Approaches Compare

| Capability | SEO Bolt-ons | Browser Scrapers | API-based Platforms |
|---|---|---|---|
| Multi-model coverage | 1-2 models | 2-4 models | 11+ models |
| Data compliance | Varies | ToS violations | Fully compliant |
| Persona-driven testing | No | Rarely | Yes |
| Citation tracking | No | Limited | Yes |
| Competitive leaderboards | Basic | Manual | Automated |
| Non-determinism handling | None | None | Statistical |
| Data reliability | Low-Medium | Low | High |

The Tradeoffs Nobody Talks About

Every tool makes tradeoffs. Here are the ones that matter and that most vendors will not tell you about:

Coverage vs. depth. Tracking 11 models with persona-driven prompts is expensive. Some tools cut costs by tracking fewer models or using generic prompts. The data is cheaper but less useful.

Speed vs. reliability. Scraping is fast. API access is slower but produces clean, auditable data. If you need results in seconds, scraping is tempting. If you need results you can trust, API access is the only path.

Simplicity vs. actionability. A simple "your visibility is 42%" dashboard is easy to understand but hard to act on. Deeper tools that show persona-level breakdowns, citation sources, and competitive positioning require more effort to interpret but produce far more actionable insights.

Where Gumshoe Fits

Gumshoe is an API-based, persona-driven AI visibility monitoring platform. We track 11 models, use official APIs exclusively, generate persona-driven prompts, provide citation tracking and competitive leaderboards, and support scheduled monitoring.

What we do well: deep, reliable measurement with actionable data. What we do not do: content optimization (we measure and recommend; you execute). We are transparent about this because measurement and action are different problems, and conflating them leads to worse outcomes for both.

Read the full methodology for details on how we handle data quality, non-determinism, and compliance.

See what rigorous AI visibility data looks like

Run a free report and evaluate the data quality for yourself. Your first 3 reports are free.

Get Started Free

Stop guessing. Start measuring.

See how AI models describe your brand across ChatGPT, Gemini, Claude, Perplexity, and more.

Free to start · No credit card required